About

I am a machine learning researcher and engineer completing an M.S. in Computer Science at the University of Southern California, with a background in Computer Engineering from Shahid Beheshti University.

My work spans deep learning theory, bioinformatics, and medical AI, with a focus on multiview and multimodal learning, generative and probabilistic models, and graph neural networks. This work has led to peer-reviewed publications in Nature Communications (2025) and IEEE Access (2024).

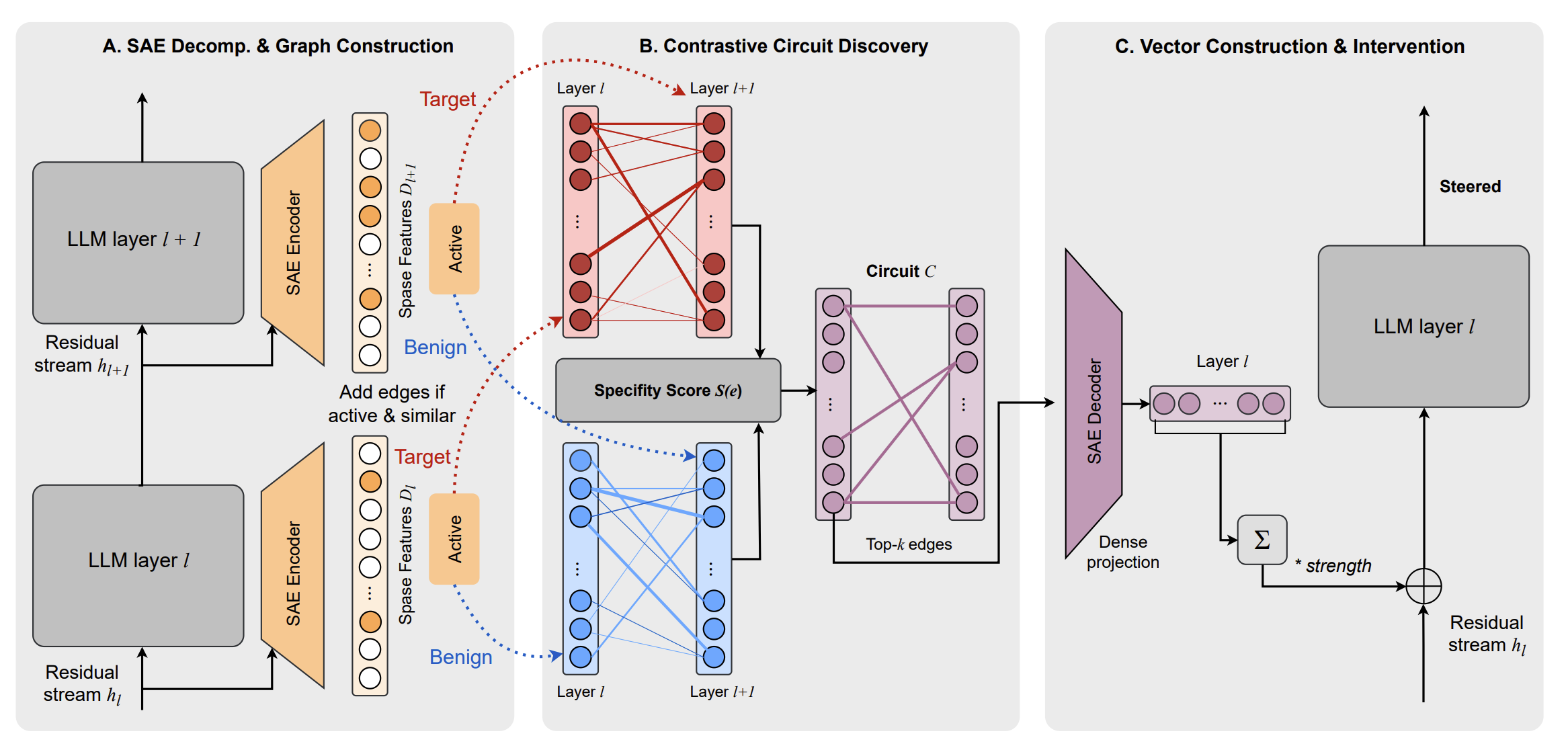

More recently, I have concentrated on large language models, particularly alignment, safety, and mechanistic interpretability. My ongoing work explores circuit-level understanding and behavioral steering of LLMs, including multi-layer steering methods inspired by transformer circuit discovery.

In parallel, I build end-to-end ML systems spanning multimodal RAG, LLM evaluation, and deep research agent pipelines. I am particularly interested in bridging theory and real-world systems by carrying insights from representation learning and interpretability into practical, scalable AI.

Education

Technologies

Research & Publications

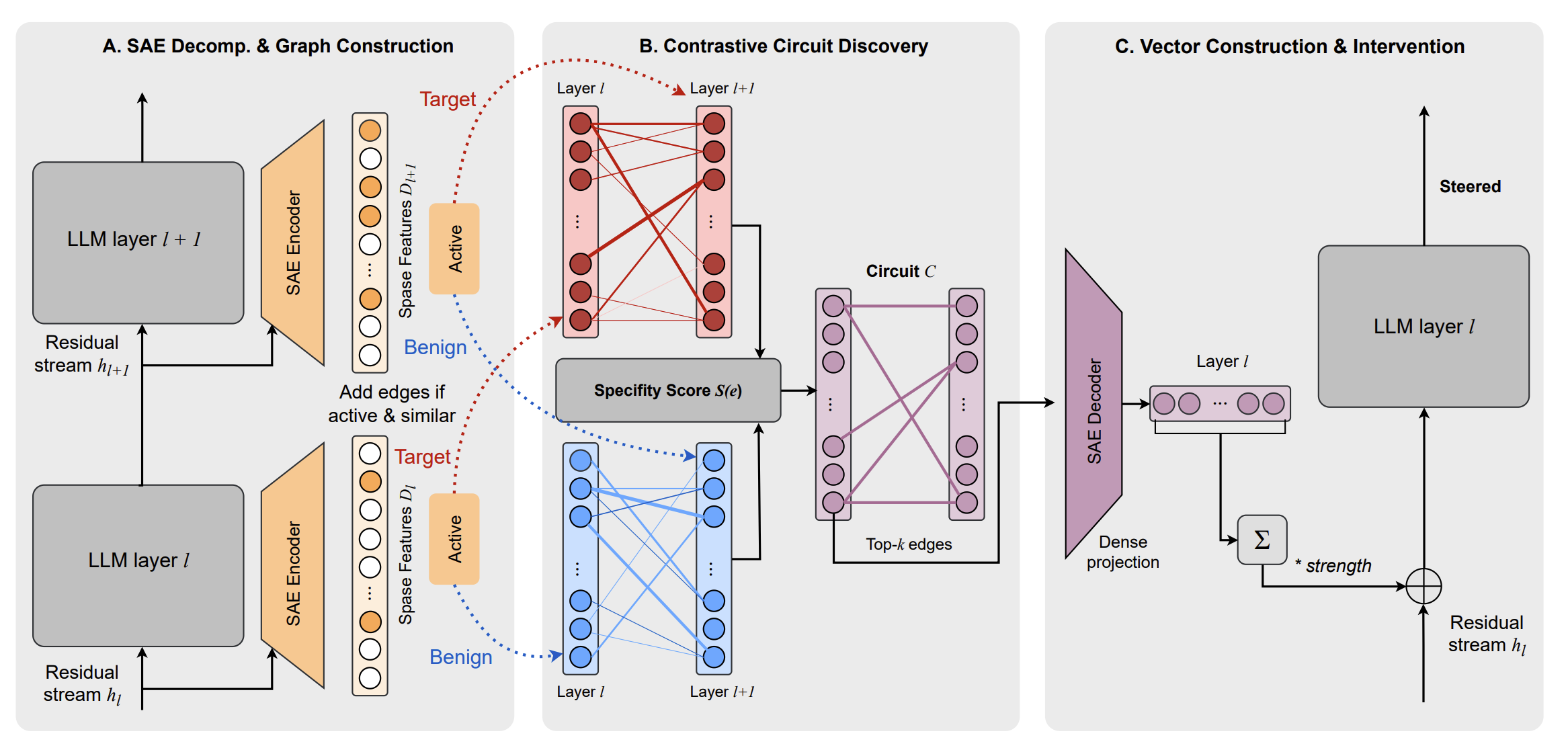

Multi-organ metabolome biological age & cardiometabolic risk

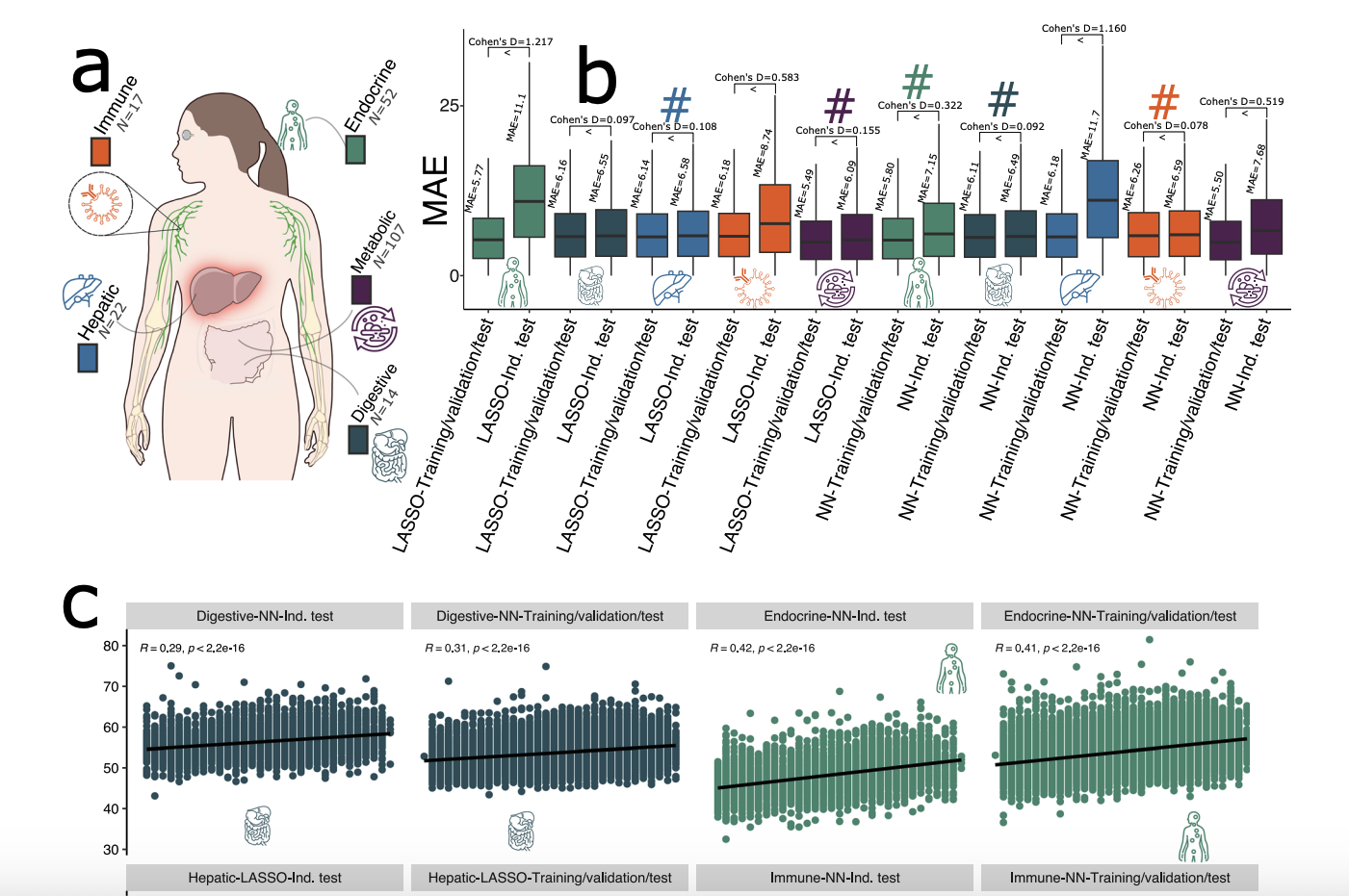

EvoGLAD: Evolving Graph-Level Representations for Anomaly Detection in Metagenomic Communities

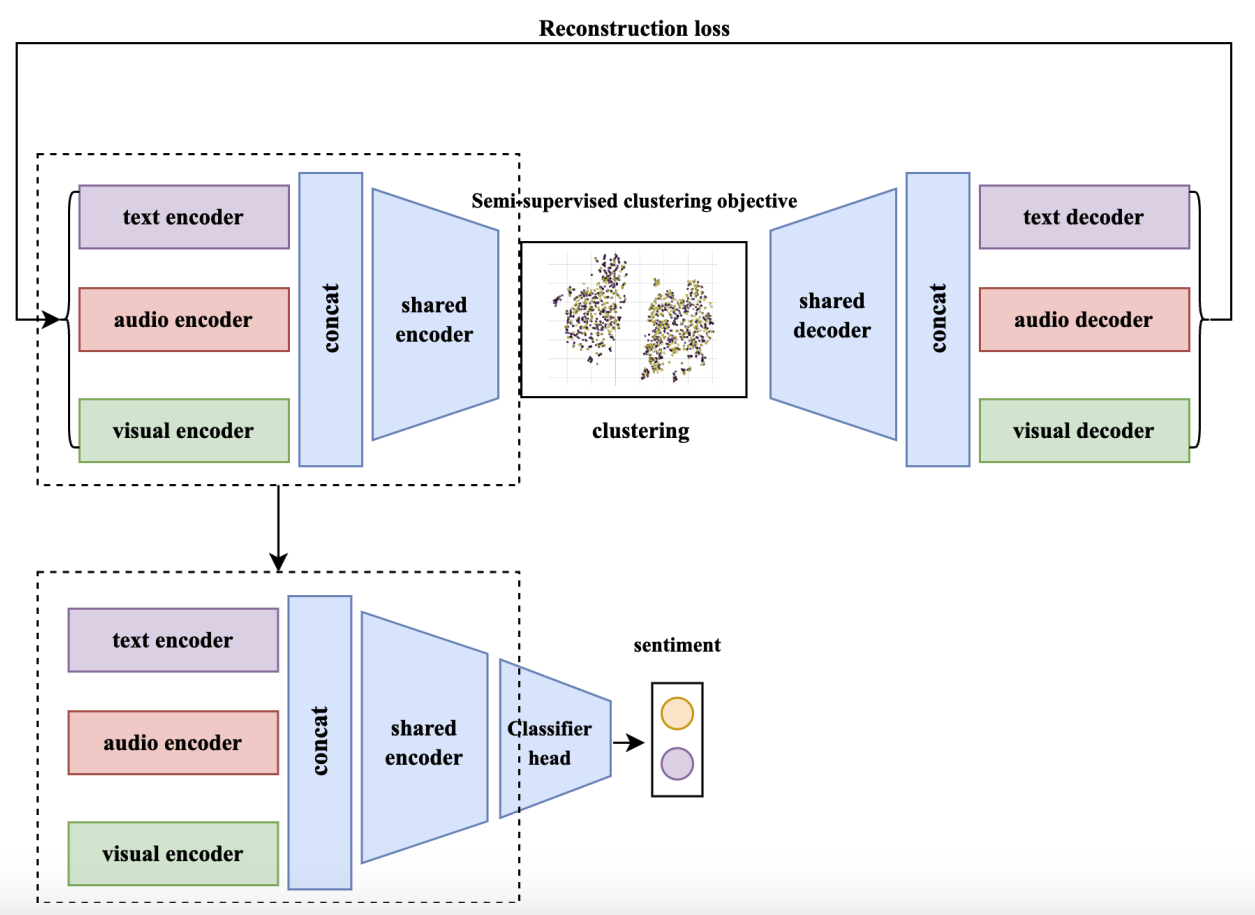

Enhancing Multi-Modal Video Sentiment Classification Through Semi-Supervised Clustering

Experience

Graph representations for metagenomic communities (METAGENE-1). Developing EvoGLAD, a framework for graph-level anomaly detection in microbial ecology using evolutionary graph neural networks.

Disease subtype discovery via weakly supervised deep clustering. Collaborating on multi-organ metabolomics research published in Nature Communications, applying multiview learning to large-scale biobank data.

Developing a novel LLM-based approach to in-depth academic research. Building end-to-end pipelines that combine multimodal RAG, LLM evaluation and benchmarking, and agentic workflows for scientific discovery.